Self-hosted AI coding data boundary

MergeLoom is designed around a customer-hosted worker boundary for self-hosted AI coding.

The worker handles the sensitive execution path. The control plane coordinates the workflow.

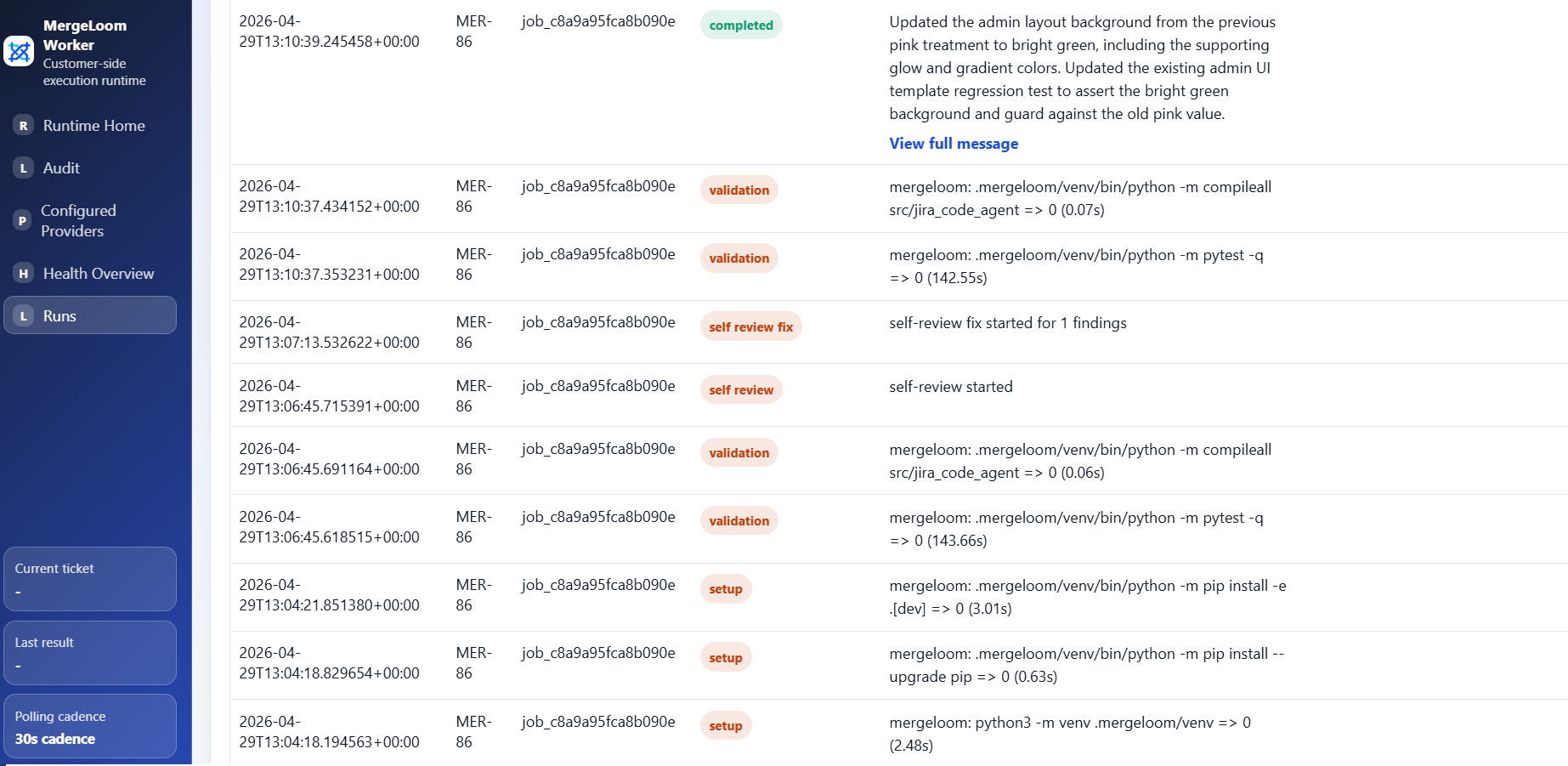

Worker-side execution

The worker performs:

- repository checkout

- context assembly

- AI execution, including model calls

- command execution

- validation

- repair attempts

- branch preparation

- local live streams and deeper run traces

This means the most sensitive operational activity happens inside your environment.

Control-plane coordination

The control plane coordinates:

- tenant/workspace setup

- integration connection state

- repository catalog metadata

- workflow rules

- job envelopes

- worker enrollment

- review request creation and updates

- visible run state

Do not treat the control plane as the place where repository execution happens. That work belongs to the worker.

Model calls

Model calls are made from the worker path.

That matters because you can align provider choice and network policy with your own infrastructure rules.

Provider readiness and credentials are managed in the local worker UI, usually http://127.0.0.1:8010/ after installation. If the worker is on a VPS, open the worker UI through an SSH tunnel to port 8010.
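As a rough illustration of the SSH tunnel mentioned above, the sketch below builds a standard OpenSSH local port-forward command. The function name `tunnel_command` and the target `deploy@worker.example.com` are hypothetical. It also assumes the worker UI listens on 127.0.0.1:8010 on the VPS, which may differ in your install.

```python
import shlex


def tunnel_command(ssh_target: str, local_port: int = 8010, remote_port: int = 8010) -> list[str]:
    """Build an ssh argv that forwards a local port to the worker UI on the remote host."""
    # Assumption: the worker UI is bound to 127.0.0.1 on the remote machine.
    forward = f"{local_port}:127.0.0.1:{remote_port}"
    # -N: do not run a remote command; -L: local port forwarding.
    return ["ssh", "-N", "-L", forward, ssh_target]


# Hypothetical SSH target; replace with your own user and host.
cmd = tunnel_command("deploy@worker.example.com")
print(shlex.join(cmd))
```

While the printed command is running, the worker UI should be reachable in a local browser at http://127.0.0.1:8010/.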

Examples:

- use Codex CLI from the worker container

- use Claude Code CLI from the worker container

- point the worker at a private OpenAI-compatible endpoint

- use approved cloud provider endpoints from inside your network
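Whichever provider option you choose, a network policy usually reduces to "the worker may call these hosts and no others". The sketch below shows that idea as a simple host allowlist check. It is not part of MergeLoom: the host name, the function `endpoint_allowed`, and the HTTPS-only rule are all assumptions made for illustration.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist; list the provider hosts your security team has approved.
ALLOWED_PROVIDER_HOSTS = {"llm.internal.example.com"}


def endpoint_allowed(url: str, allowed_hosts: set[str] = ALLOWED_PROVIDER_HOSTS) -> bool:
    """Return True only when the URL uses HTTPS and targets an approved host."""
    parts = urlsplit(url)
    # Assumption: only HTTPS endpoints are acceptable; relax this if your policy differs.
    if parts.scheme != "https":
        return False
    # urlsplit lowercases the hostname; it is None when the URL has no host.
    return (parts.hostname or "") in allowed_hosts


print(endpoint_allowed("https://llm.internal.example.com/v1"))
print(endpoint_allowed("https://api.unapproved.example.net/v1"))
```

In practice the same rule is better enforced at the network layer, for example with egress firewall rules, so that it holds regardless of worker configuration.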

What to review with security

Before rollout, review:

- where the worker runs

- which repositories the worker can access

- which commands the worker can run

- which provider endpoint the worker can call

- which secrets are mounted into the worker

- who can change provider and runtime settings

- how long worker-local traces are retained

- how PR/MR output is approved

For Kubernetes installs, prefer `secret.existingSecretName` and a cluster-managed Secret for enrollment tokens and provider API keys. Mount only the keys the worker needs, such as `JCA_WORKER_ENROLLMENT_TOKEN`, `JCA_OPENAI_API_KEY`, or provider-specific cloud credentials.

For cloud AI providers, prefer workload identity or IAM roles instead of static keys when possible. That means GKE Workload Identity Federation for Vertex AI, EKS IRSA or another AWS role source for Bedrock, and AKS Workload Identity or managed identity for Azure Foundry.

Practical rollout advice

For a first security review:

- Use a non-production repository.

- Use one worker.

- Use one provider.

- Use explicit allowed commands.

- Use clear validation commands.

- Keep human review required before merge.

- Confirm logs and traces contain what your reviewers need.
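To make "explicit allowed commands" concrete for reviewers, the sketch below shows an exact-match allowlist. It is illustrative only: this page does not describe how MergeLoom stores or enforces allowed commands, and the command list and the `is_allowed` helper are hypothetical.

```python
import shlex

# Hypothetical allowlist: each entry is the full argument vector that may run.
ALLOWED_COMMANDS = {
    ("npm", "test"),
    ("npm", "run", "lint"),
}


def is_allowed(command_line: str, allowed: set[tuple[str, ...]] = ALLOWED_COMMANDS) -> bool:
    """Return True only when the parsed command exactly matches an allowlist entry."""
    try:
        argv = tuple(shlex.split(command_line))
    except ValueError:
        # Unbalanced quotes and similar parse errors are rejected outright.
        return False
    return argv in allowed


print(is_allowed("npm test"))
print(is_allowed("npm test; rm -rf /"))
```

Matching on the whole argument vector rather than a prefix means extra arguments and shell metacharacters do not pass the check, which keeps the reviewed list equal to what can actually run.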